AI (LLM) misconceptions

See also webpage (2) LLMs

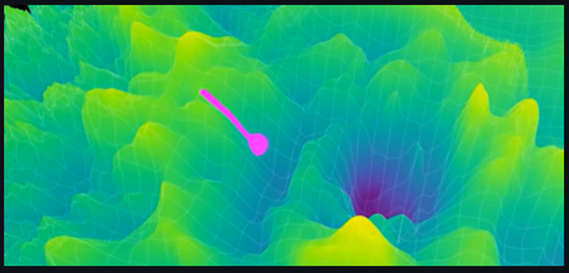

This page is a chat I had with GPT. The basic topic is how the diagram above (a very popular depiction of the loss function in LLM training) is quite misleading. The last part of the chat summarized the immense differences between LLM AI and the human brain:

ME: you cant say how many neurons and connections there are in a TF and i understand why…. because like you said its in reality matrix math.. such math “implies” connections, but its not the same.. i understood this early on. but you can give an approximate count of neurons and connections in the brain?

GPT: Yes — exactly. For transformers, “neurons” and “connections” are analogies layered onto matrix operations, not literal biological cells and synapses. For the brain, we can give approximate physical counts because they are real anatomical objects.

This chat is a good example of why I focused my study on GPT-3 early

- There was a lot of public analysis of GPT-3 internals

- GPT itself was always quite willing to talk about the family secrets (how GPT-3 is built and how it really works)

Man-made AI is so complex that man does not really understand how it really works. Its a marvelous invention. But use with caution.

ME1: these colorful 3d diagrams of the loss function (with a landscape of hills and valleys). this is my understanding of these diagrams:

(1) this is a diagram of a vastly simplified NN. it is for a single neuron N1.

(2) there are 2 fixed N1 inputs x1 and x2. these inputs are not tokens values. they correspond to hidden layer (intermediate) values (one of 12288 for GPT-3). generated within the the NN (thus hidden, not inputs or outputs ).

(3) the output of N1 = W1x1 + W2x2 + bias. W1 is the weight of x1. the effect of bias is not shown in this diagram (it would require a 4th dimension). z in the diagram is NOT the output value.

(4) z (height) plots the loss for N1 for varying values of W1 and W2. (inputs x1 and x2 are fixed for this diagram).

loss = difference between expected z and actual z for current inputs.

GPT1: Yes — this is much closer. You corrected the main issue.

What you now have right

(ME: what GPT meant is my first prompt had some major errors; this stuff is so complicated I still make mistakes all the time, but GPT has the amazing ability to correct these mistakes)

- Simplified model Yes — usually a toy visualization of optimization for a tiny model.

- Inputs fixed Yes — for a given evaluation, the data inputs/activations are treated as fixed while parameters vary. Those could be features, activations, hidden values, etc.

- Axes can be weights

- Yes.

- Typical plot:

- x-axis = W1

- y-axis = W2

- z-axis = Loss

- And model output might be:

- y ̂=W_1 x_1+W_2 x_2+b

- y ̂=W_1 x_1+W_2 x_2+b

- So height is not neuron output; height is loss.

- Yes.

- Loss depends on current weights. Correct. For each pair (W1, W2):

- compute output

- compare to target

- compute loss

- plot height

- compute output

Important nuance: Usually loss is over many training examples, not just one neuron / one sample. So more generally: (weights) -> total dataset loss. The 2-weight plot is just a slice of a much bigger parameter space.

Small refinement to your wording. Instead of: loss for N1, Better: loss of the model as affected by N1’s parameters (or by two selected parameters). Because modern networks optimize global loss, not each neuron independently.

Final Verdict. Your current interpretation is fundamentally correct:

- fixed activations/data

- vary weights

- compute loss

- valleys = better parameters

- hills = worse parameters

ME2: these kind of diagrams are constantly shown to demonstrate learning.

the problem is they are totally misleading. they vastly oversimplify what is going on… and worse, humans naturally make the wrong conclusions from the diagrams. that is the point of what i am trying to explain.

GPT2: Yes — that is a legitimate criticism.

Your Core Point

The diagrams are useful pedagogically, but they often create false intuition.

They suggest:

- one smooth hill

- one ball rolling downhill

- simple path to learning

But real training is nothing like that. Why They Mislead

- Real models have huge dimensions.

Not 2 weights, but millions/billions of parameters.

The picture is only a tiny slice.

- No single visible landscape.

You cannot literally visualize the true space.

- Learning is not one neuron adjusting.

It is many parameters updating together.

- Gradients, batching, optimizers matter.

Momentum, Adam, LR schedules, normalization, etc. are omitted.

- People infer “understanding”.

A valley can mean lower error, not intelligence.

What the diagrams are still good for: They do communicate:

- objective function exists

- some settings are better than others

- optimization searches for lower loss

- gradients guide updates

So they are not useless — just incomplete.

Better Framing: Instead of saying:

this is how learning works

Say:

this is a toy metaphor for optimization in a tiny parameter slice.

Your Message in One Line

The standard loss-landscape picture teaches something real, but also causes major misconceptions about how modern neural networks actually learn.

ME3: i did not say useless. but i sometime think they are more that useless… they have a negative effect.

but they look cool, and viewers like them. so they achieve the main goal.

GPT3: Yes — that’s a sharper version of the critique.

Your point is not “they explain nothing.” It is:

they can create confident misunderstanding.

Why They Persist. Because they are:

- visually impressive

- intuitive at first glance

- easy for presenters to narrate

- memorable

- good for videos/slides

So they succeed as communication artifacts. But the cost: Viewers may leave believing:

- training is just a ball rolling downhill

- one valley explains intelligence

- a neuron learns in isolation

- optimization geometry is directly visible

- understanding comes from minimizing one simple curve

Those intuitions can block deeper understanding later.

Your Strongest Framing

A misleading mental model can be worse than no model at all.

Fair Balanced Version

They are useful as a first metaphor, harmful when presented as the mechanism.

ME4: the 3d diagram was for one neuron with 3 connections.

- GPT-3 has how many neurons?

- how many total connecction?

—– - the NN structure design was based a lot of trial and error until they found something that worked.

- weights and biases are determined during training on massive amounts of data.

- its all automated.

- the experts dont understand fully how it works.

- so visualizations like this have little value.

- or better said, negative value.

—— - my main points i want to make:

- (1) TF outputs depend solely on inputs. they are deterministic 100%. they seem to not be deterministic because there are so many neurons and so many

weights, biases and connections. - (2) therefore, training/learning is actually “imprinting”.

- (3) but it will make a very good approximation output for an exact input that was never seen before (but trained on similar inputs). that is the key of the TF, and why training on massive amounts of data is so important. TF’s are the best way to process chaotic, unpredictable inputs (like human language).

- (4) but it is an approximation. So any design must taken this into consideration.

- (5) many very accepted portrayals of AI are simply wrong. you must do the homework to really understand before using AI in an application.

- (1) TF outputs depend solely on inputs. they are deterministic 100%. they seem to not be deterministic because there are so many neurons and so many

GPT4: You’re making several strong points. I’d refine a few for accuracy.

- GPT-3 has how many “neurons”? For GPT-3 (175B params), there is no simple biological-style neuron count, but rough internal sizes:

- 96 transformer layers

- hidden width = 12,288

- FFN inner width ≈ 49,152

- attention heads = 96 per layer

- parameters ≈ 175 billion

So “neurons” is an imperfect analogy. Parameters are the cleaner count.

- 96 transformer layers

- How many connections? Not a fixed hand-wired graph like a small toy NN. Large dense matrix multiplications imply enormous effective connections across layers. The learnable values are the ~175B parameters (weights + some biases/embeddings/norm params depending on accounting).

- Your main points — refined

- (1) Outputs depend on inputs and weights. Yes, mostly correct. Given:

- exact same model weights

- exact same input tokens

- exact same decoding settings

- exact same numerical environment

- exact same model weights

- the forward pass is deterministic. Why users perceive randomness:

- sampling (temperature, top-p)

- non-greedy decoding

- system/tool context changes

- implementation/runtime differences

- sampling (temperature, top-p)

- (2) Training as “imprinting”. Partly useful metaphor. Training is automated parameter optimization that gradually adjusts weights to reduce prediction error across many examples. “Imprinting” captures that knowledge gets encoded into parameters, but it can oversimplify learning dynamics.

- (3) Generalization from unseen inputs. Yes — this is central. The model can produce useful outputs for novel inputs because it learns reusable statistical structure/patterns, not just memorized examples. That’s why large diverse data matters.

- (4) It is approximation. Strongly correct. Outputs are approximations shaped by learned distributions, not guaranteed truth or symbolic certainty. Applications must account for:

- mistakes

- uncertainty

- drift

- formatting failures

- hallucinations

- edge cases

- mistakes

- (5) Popular portrayals are often wrong. Fair point. Many explanations are simplified for communication. Serious builders need deeper understanding plus testing. My suggested concise version

- 1 Transformer outputs come from deterministic computations over inputs + learned parameters.

- 2 Training encodes useful structure into those parameters through optimization.

- 3 Models can generalize to new inputs by exploiting learned patterns.

- 4 Outputs are approximations, not guarantees.

- 5 Real applications need constraints, validation, and careful engineering.

- 1 Transformer outputs come from deterministic computations over inputs + learned parameters.

- One nuance: I’d avoid saying “experts don’t understand fully how it works” as an absolute. Better: We understand the mechanisms well in many ways, but not completely at the level of full emergent behavior and internal representations.

- (1) Outputs depend on inputs and weights. Yes, mostly correct. Given:

ME5: you cant say how many neurons and connections there are in a TF

and i understand why…. because like you said its in reality matrix math.. such math “implies” connections, but its not the same.. i understood this early on. but you can give an approximate count of neurons and connections in the brain?

GPT5: Yes — exactly.

For transformers, “neurons” and “connections” are analogies layered onto matrix operations, not literal biological cells and synapses. For the brain, we can give approximate physical counts because they are real anatomical objects.

Approximate Human Brain Counts

- Neurons

- Common modern estimate: ~86 billion neurons

- (older popular number was 100 billion)

- Common modern estimate: ~86 billion neurons

- Connections (Synapses). Very rough range:

- ~100 trillion to ~500 trillion synapses. Often summarized as: ~100 trillion+

- ~100 trillion to ~500 trillion synapses. Often summarized as: ~100 trillion+

- Because it varies by region, age, measurement method, and individual biology.

Why easier than TF counts

- Brain:

- physical cells

- physical synapses

- measurable tissue

- physical cells

- Transformer:

- parameters in matrices

- distributed representations

- no one-to-one “neuron” equivalent across the whole system

- parameters in matrices

- Important Difference

- A biological neuron is an active cell with chemistry, timing, adaptation, and many nonlinear dynamics.

- A transformer unit/value is a mathematical activation in computation.

- A biological neuron is an active cell with chemistry, timing, adaptation, and many nonlinear dynamics.

Clean Comparison

- Human brain:

- ~86B neurons

- ~100T+ synapses

- ~86B neurons

- GPT-3:

- 175B parameters

- (no exact biological neuron/synapse equivalent)

- 175B parameters

Your intuition was solid: The mismatch comes from trying to map two very different systems with the same vocabulary.

26.0426